Selected Aspects of Document Synchronization in Distributed Systems

Two of the projects I have recently participated in were distributed systems based on document replication. Each of them was slightly different, which affected the solutions we chose. In this article I briefly present the main assumptions and requirements of each project, as well as the solutions we implemented. I also mention a few important problems we had to deal with.

General description of the systems

Distributed system for clinic management (HCS)

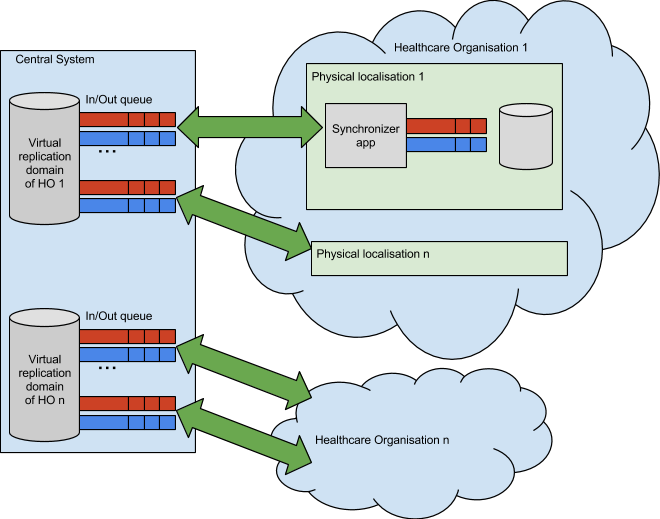

- Distributed system with one central node and multiple remote ones

- Data security within a medical company – not disclosing data to other companies

- Secure central backup

- A document can be changed in only one place at a given time

- Secure encryption of sensitive data, so that it can be read only by authorised health care institutions

- Possibility of defining document replication domains

- Transparent handling of documents in different versions of the application

- Ensuring the correct order of document replication, so that documents can be correctly deserialized into the relational model

- Possibility of adding new branches of a medical company, each downloading only its own data
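The ordering requirement can be illustrated with a topological sort. The sketch below is a minimal illustration, not the HCS implementation: it assumes that each document lists the ids of the documents it references in a hypothetical `refs` field, and the document types (patients, visits, prescriptions) are made up. It uses Python's standard `graphlib.TopologicalSorter` (Python 3.9+) so that a referenced document is always sent before the documents that point to it, which is what inserting rows with foreign keys into a relational model needs.

```python
from graphlib import TopologicalSorter


def replication_order(docs: dict[str, dict]) -> list[str]:
    """Return document ids so that every document follows the ones it references.

    `docs` maps a document id to its body; the hypothetical `refs` field lists
    referenced ids. References to documents outside `docs` are ignored here,
    on the assumption that the receiving node already has them.
    """
    graph = {
        doc_id: {ref for ref in doc.get("refs", ()) if ref in docs}
        for doc_id, doc in docs.items()
    }
    # static_order() yields predecessors first and raises CycleError on cycles.
    return list(TopologicalSorter(graph).static_order())


batch = {
    "prescription-1": {"refs": ["visit-1"]},
    "visit-1": {"refs": ["patient-1"]},
    "patient-1": {},
}
print(replication_order(batch))  # ['patient-1', 'visit-1', 'prescription-1']
```

A cycle of references would make a valid order impossible; `TopologicalSorter` reports it by raising `graphlib.CycleError` instead of silently producing a wrong order.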

Distributed multimedia document database for a cruise ship operator – Digital Asset DataBase (DADB)

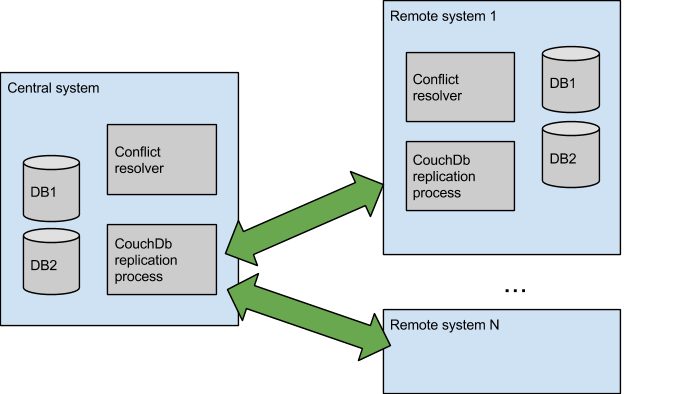

- Distributed system with one central node and multiple remote ones

- Possibility of working with a significantly limited connection (satellite transmission), which requires minimizing the amount of transmitted data

- Possibility of changing any document on any node at the same time

- Transcoding of multimedia content to obtain lighter versions of large objects, as well as thumbnails

- No possibility of communication between remote nodes

- Possibility of controlling where the binary content of particular assets is located

- User permissions for individual documents

The two systems were therefore quite different, and consequently each of them called for different solutions during development.

Encountered difficulties

Multi-master – resolving conflicts

In DADB, master-master replication allows the same document to be edited on different nodes at the same time. As a result, two competing versions of one document can appear, and we need to pick the “correct” one in a predictable way, which means that the changes in the other version are lost. Given the nature of the data stored in DADB, this is not critical, so such a solution is acceptable. Note, however, that any data loss is unacceptable in a system such as HCS.
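One way to make the choice of the “correct” version predictable, so that every node picks the same winner without consulting the others, is the rule CouchDB documents for conflicting revisions: a non-deleted leaf beats a deleted one, then the revision with the longer history (the higher number before the dash in `_rev`) wins, and any remaining tie is broken by comparing the `_rev` strings. The sketch below models that rule in plain Python for illustration only; it is not CouchDB code, and the `Revision` class and `pick_winner` function are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Revision:
    """Hypothetical stand-in for one conflicting leaf revision of a document."""
    rev: str               # CouchDB-style "<generation>-<hash>", e.g. "2-7051cb..."
    deleted: bool = False

    @property
    def generation(self) -> int:
        return int(self.rev.split("-", 1)[0])


def pick_winner(leaves: list[Revision]) -> Revision:
    """Pick the same winner on every node, whatever order the leaves arrive in."""
    # Non-deleted first, then the longest history, then the highest _rev string.
    return max(leaves, key=lambda r: (not r.deleted, r.generation, r.rev))


conflict = [Revision("2-aaa"), Revision("2-bbb"), Revision("3-ccc", deleted=True)]
print(pick_winner(conflict).rev)  # 2-bbb
```

The losing versions are not merged: whatever was changed only in "2-aaa" is exactly the data loss that DADB accepts and HCS cannot.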

Data migration

If we update the application on one of the nodes and the new version changes the document schema, the data has to be migrated. The two systems approached this problem differently:

1. DADB

– Replication between nodes with different versions of the data schema is blocked

– All the documents stored in the database on a given node have to be migrated

2. HCS

– Documents in different versions can be replicated

– Each node can read documents in older versions

– If a document in a newer version arrives, it is placed in a queue that is processed when the node is upgraded

In the long term, the HCS approach seems better and more flexible.
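A minimal sketch of the HCS-style approach, assuming that every document carries a hypothetical integer `schema` field and that each migration function upgrades a document by exactly one version. Older documents are stored as they arrive and upgraded when read, while documents newer than the node's own schema wait in a queue that is drained after the node is upgraded. The `Node` class and its methods are illustrative names, not the HCS API.

```python
from __future__ import annotations

from collections import deque
from typing import Callable

# Hypothetical convention: migrations[v] upgrades a document from version v to v + 1.
Migration = Callable[[dict], dict]


class Node:
    """Hypothetical HCS-like node: reads older documents, parks newer ones."""

    def __init__(self, schema_version: int, migrations: dict[int, Migration]) -> None:
        self.schema_version = schema_version
        self.migrations = dict(migrations)
        self.store: dict[str, dict] = {}     # documents kept in the version they arrived in
        self.pending: deque[dict] = deque()  # documents newer than this node understands

    def receive(self, doc: dict) -> None:
        if doc["schema"] > self.schema_version:
            self.pending.append(doc)  # cannot interpret it yet: wait for the upgrade
        else:
            self.store[doc["id"]] = doc

    def read(self, doc_id: str) -> dict:
        doc = dict(self.store[doc_id])
        while doc["schema"] < self.schema_version:
            doc = self.migrations[doc["schema"]](doc)  # upgrade on read, one step at a time
        return doc

    def upgrade(self, new_version: int, new_migrations: dict[int, Migration]) -> None:
        self.schema_version = new_version
        self.migrations.update(new_migrations)
        waiting = list(self.pending)
        self.pending.clear()
        for doc in waiting:  # documents that are still too new go back into the queue
            self.receive(doc)
```

Converting old documents on read avoids the bulk migration that DADB requires, at the cost of running the migration chain each time an old document is read.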

Documents queuing

HCS has a single queue of sent and received documents, which ensures data integrity throughout the process but, at the same time, prevents documents from being transmitted in parallel.

By contrast, CouchDB, used in DADB, replicates each database independently and in parallel, which speeds up the whole process.

In HCS, a problem arises when a document being sent contains a structural error: processing of the queue is then blocked and manual intervention is required. In DADB, CouchDB ignores such a situation, and documents keep being sent. On the one hand, this is preferable because the process is not blocked; on the other hand, the error is propagated to other nodes, which makes a later repair more difficult.
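The trade-off between blocking and not blocking can be shown on a single outgoing queue. In the sketch below, `drain`, `is_valid` and `send` are hypothetical names: with `block_on_error=True` it behaves the way HCS is described above, and with `block_on_error=False` it forwards faulty documents the way DADB is described. It deliberately does not model CouchDB's per-database parallelism.

```python
from collections import deque
from typing import Callable


def drain(queue: deque, is_valid: Callable[[dict], bool],
          send: Callable[[dict], None], block_on_error: bool) -> list[dict]:
    """Send queued documents in order; return the ones that were sent."""
    sent = []
    while queue:
        doc = queue[0]
        if block_on_error and not is_valid(doc):
            # HCS-like behaviour: the faulty document stays at the head and
            # everything behind it waits for manual intervention.
            break
        # In the non-blocking mode a faulty document is forwarded like any
        # other one, so the error reaches the receiving nodes.
        send(doc)
        sent.append(queue.popleft())
    return sent
```

With a queue of three documents where the second one is malformed, the blocking mode sends only the first and leaves two waiting, while the non-blocking mode empties the queue and delivers the malformed document too.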

Unavailability of a node at a given moment

In both systems, unavailability of the central node is critical, as it interrupts data synchronization.

Because of the nature of master-master replication, unavailability of any other node is acceptable in DADB: documents can be edited anywhere and at any time.

In HCS, each document carries an ownership tag, which adds the overhead of requesting an ownership transfer between nodes. As long as the owning node is unavailable, the document cannot be edited on any other node.
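The single-owner rule and its cost can be sketched as follows. This is a simplified, hypothetical model (one table stands in for the ownership tags carried by the documents, and node reachability is just a set of names); it only shows why an unreachable owner freezes a document.

```python
class OwnershipError(Exception):
    pass


class OwnershipTable:
    """Hypothetical model of the HCS rule: one owner node per document at any time."""

    def __init__(self) -> None:
        self.owner: dict[str, str] = {}  # document id -> owning node
        self.online: set[str] = set()    # nodes currently reachable

    def edit(self, doc_id: str, node: str) -> None:
        if self.owner[doc_id] != node:
            raise OwnershipError(f"{node} does not own {doc_id}; request a transfer first")

    def request_transfer(self, doc_id: str, requester: str) -> None:
        current = self.owner[doc_id]
        if current == requester:
            return
        if current not in self.online:
            # The transfer needs the current owner's consent, so the document is frozen.
            raise OwnershipError(f"owner {current} of {doc_id} is unavailable")
        self.owner[doc_id] = requester
```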

DADB – replication asynchronism

CouchDB replicates each database individually. This is beneficial especially when one of the databases contains large documents that take a long time to transfer, or when there are many documents to send. However, it causes temporary data inconsistency, because related documents stored in different databases may reach a node at different times.
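The temporary inconsistency can be reproduced with two independent one-way replications. In this simulation the `assets` and `collections` databases, the `collection` reference field and the batch sizes are all hypothetical: assets replicate quickly and collections slowly, so for a while the remote node holds an asset whose collection has not arrived yet.

```python
def replicate_step(source: dict, target: dict, batch: int) -> int:
    """One pass of an independent one-way replication: copy up to `batch` missing docs."""
    missing = [doc_id for doc_id in source if doc_id not in target][:batch]
    for doc_id in missing:
        target[doc_id] = source[doc_id]
    return len(missing)


def dangling(assets: dict, collections: dict) -> list[str]:
    """Ids of assets whose referenced collection has not been replicated yet."""
    return sorted(a for a, doc in assets.items() if doc["collection"] not in collections)


# Central node (hypothetical data).
central_assets = {"a1": {"collection": "c3"}}
central_collections = {"c1": {}, "c2": {}, "c3": {}}

# Remote node, replicating each database on its own schedule.
remote_assets: dict = {}
remote_collections: dict = {}
replicate_step(central_assets, remote_assets, batch=10)           # fast database
replicate_step(central_collections, remote_collections, batch=1)  # slow database
print(dangling(remote_assets, remote_collections))  # ['a1']

# Keep replicating until neither database has anything left to send.
while (replicate_step(central_assets, remote_assets, batch=10)
       + replicate_step(central_collections, remote_collections, batch=1)):
    pass
print(dangling(remote_assets, remote_collections))  # []
```

Once both replications have caught up, the node is consistent again; in the meantime, code reading the data has to tolerate references that cannot be resolved yet.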

DADB – transmitting binary data

In addition to regular documents, DADB handles binary content, which is transmitted independently of the documents. We had to cope with low bandwidth and introduce an additional item, a ‘binary content event’, which is replicated in the same way as documents and describes changes to the content: its source URL, modification and deletion.
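A binary content event might look roughly like the record below. The field names, the two event kinds and the `apply_events` helper are assumptions made for illustration, not the DADB format. Events are applied in replication order and produce the list of binaries a node still has to fetch; because pending downloads are keyed by asset in this sketch, a later change of the same asset replaces an earlier one, so only the latest version crosses the slow link.

```python
from dataclasses import dataclass
from typing import Literal, Optional


@dataclass(frozen=True)
class BinaryContentEvent:
    """Hypothetical event replicated like a document; the binary itself travels separately."""
    asset_id: str
    kind: Literal["changed", "deleted"]
    source_url: Optional[str] = None  # where the new binary can be fetched from


def apply_events(events: list[BinaryContentEvent], local_files: dict[str, str]) -> list[str]:
    """Apply events in replication order; return the URLs this node still has to download."""
    to_fetch: dict[str, str] = {}
    for event in events:
        if event.kind == "changed" and event.source_url is not None:
            # A later change of the same asset overrides an earlier pending download.
            to_fetch[event.asset_id] = event.source_url
        elif event.kind == "deleted":
            to_fetch.pop(event.asset_id, None)
            local_files.pop(event.asset_id, None)  # drop the local copy, if any
    return list(to_fetch.values())


local = {"a1": "/media/a1.jpg", "a2": "/media/a2.mp4"}
events = [
    BinaryContentEvent("a1", "changed", "https://central.example/assets/a1?v=2"),
    BinaryContentEvent("a2", "deleted"),
    BinaryContentEvent("a1", "changed", "https://central.example/assets/a1?v=3"),
]
print(apply_events(events, local))  # ['https://central.example/assets/a1?v=3']
```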

Summary and conclusions

Distributed systems significantly lengthen the development process and increase its complexity. Testing and debugging also become considerably more complicated: for example, to reproduce a schema incompatibility problem, you have to build and run the application in several versions and create data with different structures.

For both development and maintenance, it is important to design the system so that diagnosing failures is as simple as possible. The most important thing here is comprehensive logs that record which user's request caused a given action. Ideally, this information would also be propagated to other nodes, which would make it possible to trace how a change spread through the system.
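A minimal sketch of propagating the request context along with a replicated change, assuming a hypothetical envelope (`ReplicatedChange`) that carries the originating node, user and a request id, and records every node it passes through. Each receiving node logs the full path with the standard `logging` module, so the history of a change can be reconstructed from the logs of any node it reached.

```python
import logging
import uuid
from dataclasses import dataclass, field

log = logging.getLogger("replication")


@dataclass
class ReplicatedChange:
    """Hypothetical envelope: a change plus the context of the request that caused it."""
    doc_id: str
    origin_node: str
    origin_user: str
    request_id: str = field(default_factory=lambda: uuid.uuid4().hex)
    path: list[str] = field(default_factory=list)  # nodes the change has passed through

    def __post_init__(self) -> None:
        if not self.path:
            self.path.append(self.origin_node)


def receive(change: ReplicatedChange, node: str) -> ReplicatedChange:
    """Record that `node` applied the change and log where it came from."""
    change.path.append(node)
    log.info("doc=%s request=%s user=%s path=%s", change.doc_id, change.request_id,
             change.origin_user, " -> ".join(change.path))
    return change
```

For a change made in one clinic and relayed through the central node to another clinic, every log line on the way carries the same `request_id`, which is what allows the logs of different nodes to be correlated.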

Our experience with CouchDB replication in DADB suggests that the most convenient solution is to separate storing data on a node from replicating it. In the long term, leaving everything to CouchDB would create extra work, for example handling data in a version that differs from the supported one.

Most importantly, the solution has to fit the problem. Medical and personal data are critical in HCS, which leads to different solutions than in DADB, where temporary inconsistency (“eventual consistency”) and even the loss of some data are acceptable.